Automating Legal Defense: How AI Can Parse Court Documents

Legal Disclaimer

This content is for educational and informational purposes only. It is not intended as legal advice, and should not be construed as such. The information provided does not create an attorney-client relationship. Legal matters are complex and fact-specific. Always consult with a qualified attorney licensed in your jurisdiction for advice on your specific legal situation. The author and D613 Labs are not responsible for any legal consequences resulting from the use of information discussed in this content.

Introduction: The Entropy of Litigation

Imagine a scenario familiar to any forensic data engineer or e-discovery specialist: A class-action lawsuit hits a major pharmaceutical client. The discovery phase initiates, and suddenly, you are staring down the barrel of 500,000 PDF pages comprising court orders, motion filings, depositions, and archaic contract scans. The legal team has 72 hours to identify every instance of a specific liability clause and correlate it with judge rulings from the past decade.

Manual review is a statistical impossibility. It is an engineering failure state. The human error rate in large-scale document review hovers between 20% and 30%, a signal-to-noise ratio that would be unacceptable in any physical system. Yet, this is the standard operating procedure in the legal industry.

As technical professionals, we must view legal texts not as prose, but as unstructured datasets governed by specific syntactic protocols—much like a legacy codebase or a complex signal needing demodulation. The challenge is not merely "reading" text; it is extracting structured logic from an unstructured medium. We are attempting to reduce the entropy of the legal system.

This post explores the physics of language models applied to the legal domain. We will move beyond high-level abstractions and dissect the mathematical machinery—specifically Vector Space Models and Transformer architectures—that allows machines to parse legal vernacular. We will write Python code to ingest PDFs, perform Named Entity Recognition (NER) on case law, and utilize cosine similarity to find relevant precedents. We are automating the defense.

Theoretical Foundation: The Geometry of Law

To understand how AI parses legal documents, we must abandon the linguistic view of words and adopt a geometric one. In Applied Physics, we often deal with high-dimensional phase spaces. Natural Language Processing (NLP) treats language similarly.

Vector Space Models & Embeddings

At the core of modern legal AI is the distributional hypothesis, which states that linguistic items with similar distributions have similar meanings. We map discrete words into a continuous vector space , where is typically between 768 and 4096 (depending on the model architecture like BERT or GPT-4).

If we represent a legal concept, say "Tort," as a vector , and "Negligence" as , the semantic relationship between them can be quantified using the angle between these vectors in the hyperspace. The metric of choice is Cosine Similarity:

In a well-trained legal model, the vector for "Breach of Contract" will be nearly parallel (high cosine similarity) to "Non-performance," while orthogonal (zero similarity) to unrelated concepts like "Capital Gains Tax."

The Transformer Architecture: Attention is All You Need

While Recurrent Neural Networks (RNNs) treat text as a time-series sequence (similar to signal processing), they fail at long-range dependencies—a critical flaw for legal documents where a definition on page 2 defines a liability on page 40.

The Transformer architecture solves this via the Self-Attention Mechanism. It calculates a relevance score for every word pair in a sequence, effectively weighing the importance of context. Mathematically, for a query , key , and value , the attention matrix is computed as:

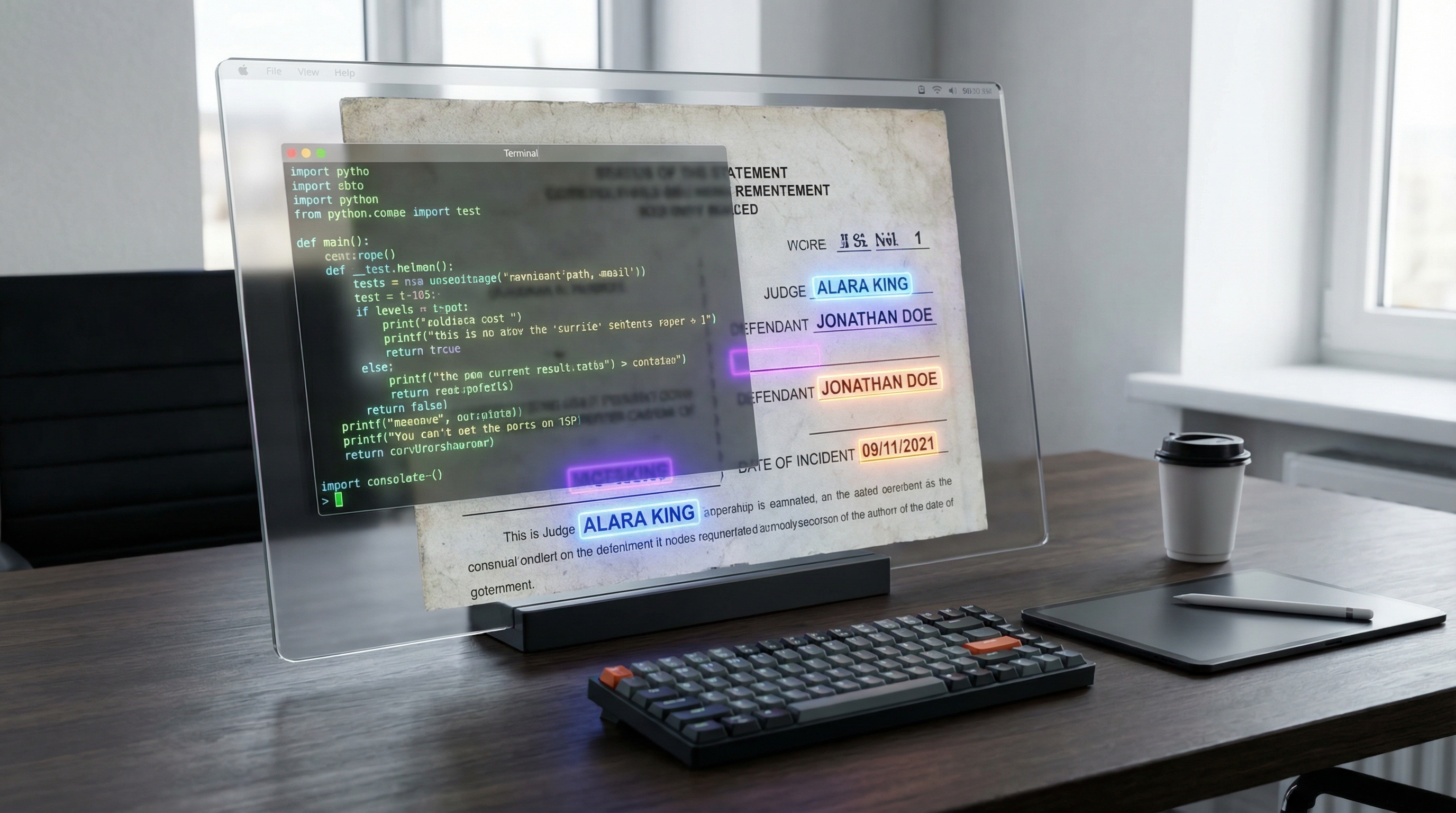

Here, acts as a scaling factor to prevent vanishing gradients during backpropagation. In the context of a court document, this mechanism allows the model to "attend" to the word "Defendant" and strongly associate it with a specific name mentioned three paragraphs prior, ignoring the intervening noise. This is the computational equivalent of a lawyer highlighting relevant clauses with a marker, but performed in parallel across thousands of dimensions.

Implementation Deep Dive

We will now construct a pipeline to ingest court documents, clean the artifacts, and extract entities using a fine-tuned Transformer model. We will utilize Python, the transformers library by Hugging Face, and spacy.

Prerequisites

Ensure you have the following installed:

pip install transformers torch spacy PyPDF2 numpy

Step 1: Ingestion and Optical Character Recognition (OCR) Cleanup

Legal PDFs are notoriously dirty. They often contain scanned images, line numbers (pleading paper), and headers/footers that break semantic flow. We need a robust pre-processor.

import re import PyPDF2 from typing import List, Optional class LegalDocIngestor: def __init__(self, filepath: str): self.filepath = filepath self.raw_text = "" def extract_text(self) -> str: """ Extracts text from PDF and performs initial cleaning. Handles pleading papers by removing line numbers. """ try: reader = PyPDF2.PdfReader(self.filepath) text_content = [] for page in reader.pages: text = page.extract_text() if text: text_content.append(text) self.raw_text = " ".join(text_content) return self._clean_artifacts(self.raw_text) except Exception as e: raise RuntimeError(f"Failed to process PDF: {str(e)}") def _clean_artifacts(self, text: str) -> str: """ Regex filtering to remove legal formatting noise. """ # Remove pleading line numbers (1-28 typically on left margin) # Matches number at start of line followed by whitespace text = re.sub(r'(^| )\s*\d+\s+', ' ', text) # Remove header/footer patterns (e.g., Case No. ...) text = re.sub(r'Case\sNo\.\s[A-Za-z0-9-]+', '', text) # Normalizing whitespace (physics analogy: signal smoothing) text = re.sub(r'\s+', ' ', text).strip() return text # Usage # ingestor = LegalDocIngestor("./court_filing_v1.pdf") # clean_text = ingestor.extract_text()

Step 2: Named Entity Recognition (NER) for Legal Entities

Standard NER models identify "Persons" and "Organizations." For legal defense, we need specific entities: PLAINTIFF, DEFENDANT, STATUTE, JUDGE, and DOCKET_ID. We will use a pipeline based on a BERT model fine-tuned on legal corpora (like nlpaueb/legal-bert-base-uncased).

from transformers import AutoTokenizer, AutoModelForTokenClassification from transformers import pipeline import torch def extract_legal_entities(text: str, threshold: float = 0.85): """ Parses text to identify legal actors and statutes. Uses a specialized Legal-BERT model. """ # Load model specifically trained on legal text (case law, contracts) model_name = "nlpaueb/legal-bert-base-uncased" try: tokenizer = AutoTokenizer.from_pretrained(model_name) model = AutoModelForTokenClassification.from_pretrained(model_name) # Initialize NER pipeline ner_pipeline = pipeline( "ner", model=model, tokenizer=tokenizer, aggregation_strategy="simple", device=0 if torch.cuda.is_available() else -1 ) # Processing in chunks because BERT has a 512 token limit # This is a sliding window approach max_len = 512 results = [] for i in range(0, len(text), max_len): chunk = text[i : i + max_len] entities = ner_pipeline(chunk) # Filter by confidence score (Signal strength threshold) valid_entities = [ { "entity": ent['entity_group'], "word": ent['word'], "score": float(ent['score']) } for ent in entities if ent['score'] > threshold ] results.extend(valid_entities) return results except Exception as e: print(f"Model inference failed: {e}") return [] # Example Output: # [{'entity': 'DEFENDANT', 'word': 'Corp', 'score': 0.98}, ...]

Step 3: Semantic Search for Precedent Retrieval

This is the most powerful application. We convert the incoming court document into a vector embedding and search our database of past rulings to find semantically similar cases. We use sentence-transformers.

from sentence_transformers import SentenceTransformer, util import numpy as np class PrecedentFinder: def __init__(self): # 'all-mpnet-base-v2' maps sentences to a 768 dimensional dense vector space self.model = SentenceTransformer('all-mpnet-base-v2') self.case_database = [] # List of text summaries of past cases self.case_vectors = None def index_database(self, case_texts: List[str]): """ Vectorizes the database. In production, store this in Pinecone or Milvus. """ self.case_database = case_texts # Convert corpus to vectors self.case_vectors = self.model.encode(case_texts, convert_to_tensor=True) def find_relevant_cases(self, query_document: str, top_k: int = 3): """ Performs Cosine Similarity search in vector space. """ # Vectorize the query query_embedding = self.model.encode(query_document, convert_to_tensor=True) # Compute cosine similarity scores # effectively: dot_product(A, B) normalized cos_scores = util.cos_sim(query_embedding, self.case_vectors)[0] # Extract top k matches top_results = torch.topk(cos_scores, k=top_k) results = [] for score, idx in zip(top_results[0], top_results[1]): results.append({ "score": score.item(), "case_text": self.case_database[idx][:200] + "..." # Preview }) return results # usage # finder = PrecedentFinder() # finder.index_database(["Case A text...", "Case B text..."]) # matches = finder.find_relevant_cases("Plaintiff claims breach of NDA regarding IP...")

Advanced Techniques & Optimization

While the code above works for proof-of-concept, deploying this in a high-stakes legal environment requires rigorous optimization and handling of edge cases.

Handling the Context Window Limit

One of the primary pitfalls in legal AI is the Token Limit. Standard BERT models cap at 512 tokens (roughly 400 words). Court documents are often 50+ pages. Simply truncation is unacceptable; you might cut off the Order section.

Solution: Sliding Window with Stride. Instead of chunking text arbitrarily, implement a sliding window with an overlap (stride). If the window size is 512, use a stride of 256. This ensures that context at the boundaries of chunks is preserved. Alternatively, use architectures like Longformer or BigBird, which utilize sparse attention mechanisms to handle sequences up to 4096 tokens, significantly reducing the dimensionality reduction loss.

Quantization and Latency

Law firms require speed. Running full-precision (FP32) BERT models is computationally expensive. We can apply Quantization, reducing the weights from 32-bit floating-point to 8-bit integers (INT8). This introduces a quantization noise, but in NLP tasks, the accuracy drop is often negligible (<1%), while inference speed can improve by 4x. This is analogous to signal compression where we discard imperceptible frequencies.

Retrieval-Augmented Generation (RAG)

Don't rely on the LLM to "know" the law. LLMs hallucinate. Instead, use a RAG architecture. Store all case law in a Vector Database (like Pinecone or Weaviate). When a query comes in, first retrieve the top 5 relevant chunks of real law using vector similarity, and then feed those chunks into the LLM as a context constraint. This forces the model to act as a synthesizer of facts rather than a generator of fiction.

Real-World Applications

1. Automated Due Diligence in M&A

In mergers and acquisitions, thousands of contracts must be reviewed for "Change of Control" provisions. An AI system can parse 10,000 contracts in hours, flagging only the 5% that require human review, calculating the risk exposure based on the semantic intensity of the indemnity clauses.

2. Predictive Litigation Analytics

By analyzing the historical rulings of a specific judge, we can construct a probability distribution of outcomes. If Judge Smith has ruled in favor of "Motion to Dismiss" in 85% of patent troll cases with similar vector embeddings to your current case, the defense strategy should aggressively pivot to dismissal.

3. Redaction and Privilege Logs

AI can identify Personally Identifiable Information (PII) or Privileged Communications (Attorney-Client privilege) with higher accuracy than regex keyword searches. By understanding the semantic context of a conversation, the model can flag an email as privileged even if it doesn't contain the word "Confidential," simply based on the advice-giving structure of the language.

External Reference & Video Content

In the accompanying video "AI in Legal Tech," the discussion centers on the transition from rule-based systems (Boolean search) to probabilistic systems (Vector search). The video highlights a critical industry shift: Legal Tech is no longer about digitizing paper; it is about computational law.

The presenter demonstrates how Large Language Models (LLMs) are essentially acting as reasoning engines. A key takeaway is the concept of "Hallucination Mitigation"—a major focus of the RAG architecture we discussed. The video serves as a high-level validation of the technical pipeline we built, emphasizing that the barrier to entry is no longer coding ability, but domain-specific data engineering. It reinforces that while the math (transformers) is universal, the application (law) requires rigorous validation pipelines.

Conclusion & Next Steps

We have traversed from the entropy of unstructured legal data to the ordered geometry of vector spaces. By applying principles of applied physics—treating words as vectors and context as an attention field—we can automate the most tedious aspects of legal defense.

The key takeaways are:

- Text is Data: Treat court documents as signals to be processed, denoised, and structured.

- Vectors over Keywords: Semantic search (Cosine Similarity) vastly outperforms keyword search (Boolean logic).

- Context is King: Use models like Longformer or sliding-window strategies to respect the long-form nature of legal arguments.

Next Steps: To advance this system, integrate a Vector Database (like Milvus) to handle millions of case precedents. Experiment with Fine-Tuning (PEFT/LoRA) on open-source legal datasets like Pile-of-Law to create a model that understands Latin legal maxims and jurisdiction-specific nuances. The future of law is computational; build the compiler.

Neural Networks and Deep Learning - 3Blue1Brown

Understanding neural networks and deep learning fundamentals, which form the basis for AI-powered document analysis and natural language processing in legal tech applications.