From Prompting to Agents: Building Autonomous AI Workflows in 2026

1. Introduction: The Collapse of the Chat Interface

It is 3:14 AM. The pager screams. A legacy microservice has memory-leaked into oblivion, cascading failures across your Kubernetes cluster. In 2023, this meant waking up, wiping the sleep from your eyes, parsing cryptic logs, and pasting them into a chat window to ask, "What does this stack trace mean?"

That was the era of prompting—a stateless, request-response paradigm where the human served as the runtime environment, the memory bank, and the decision engine. The AI was merely a stochastic encyclopedia.

Welcome to 2026. The paradigm has shifted from Prompt Engineering to Agent Architecture.

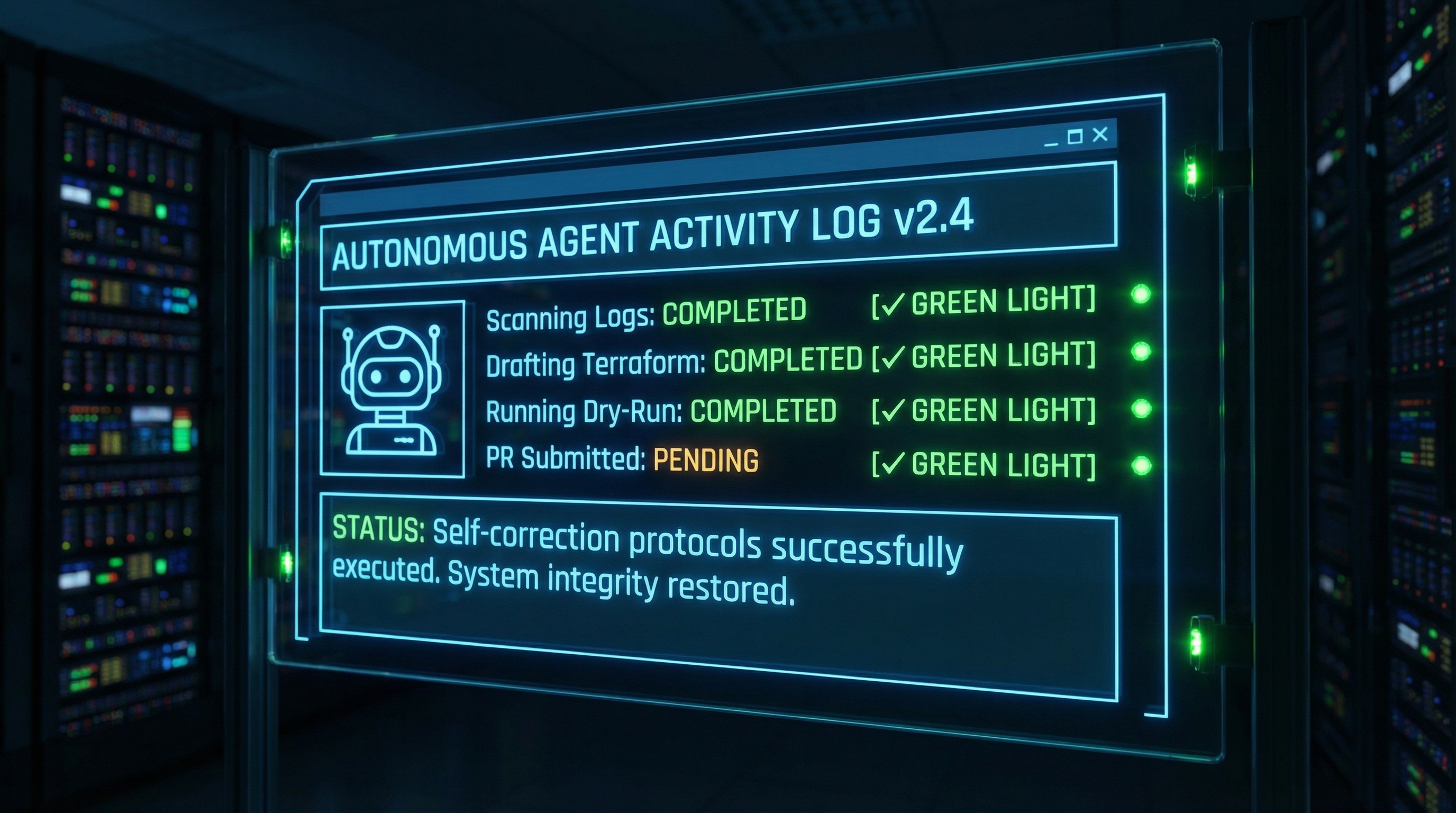

Today, when that alert fires, it doesn't wake you immediately. Instead, it triggers a DevOps_Level2_Agent. This autonomous entity, powered by the latest reasoning kernels (like Gemini 3 or GPT-5.1), ingests the alert, SSHs into the bastion host, runs diagnostics, identifies the memory leak, drafts a hotfix, runs a regression test suite, and then pages you with a drafted Pull Request and a "Yes/No" confirmation dialog for deployment.

We have graduated from stochastic parrots to goal-seeking missiles. However, building these systems requires a fundamental unlearning of chat-based interaction. We are no longer writing poems; we are designing cognitive control loops. This article is written for the senior engineer who understands that an LLM is not a chatbot, but a non-deterministic CPU that requires a new kind of operating system.

In this treatise, we will dismantle the physics of agentic workflows, mathematically formalize the transition from stateless chains to stateful graphs, and implement a robust, production-grade DevOps agent in Python. We will ignore the hype and focus on the engineering reality of autonomous systems.

2. Theoretical Foundation: The Physics of Agency

To understand why 2026-era agents work, we must look at them through the lens of statistical mechanics and control theory.

The Markovian Limit

Traditional Large Language Models (LLMs) operate as Markov Chains. Mathematically, they approximate the conditional probability distribution:

Where represents a token and is the context window. In a simple chat interface, the system is essentially stateless between turns unless the history is re-injected. This is high-entropy generation; the model is simply surfing the gradient of maximum likelihood for the very next token.

The Agent State Vector

An agentic workflow transforms this process by introducing a persistent State Vector (). An agent is not a function of text-in/text-out; it is a transition function operating on a state space:

Where comprises:

- The Goal Function (): The immutable objective (e.g., "Fix the build").

- Episodic Memory (): What has been done so far (trajectory).

- Semantic Memory (): Access to documentation/knowledge base.

- Environmental Context (): The feedback from tool execution (stdout, API responses).

Reasoning as Path Integration

The breakthrough in models like OpenAI's o3 and Claude Opus 4.5 is the ability to perform Test-Time Compute. Instead of collapsing the wavefunction immediately to a token, the model explores a latent tree of thoughts. It simulates potential futures.

In physics terms, if the solution space is a high-dimensional energy landscape, a standard LLM performs a greedy descent. A reasoning agent, however, performs something akin to simulated annealing or Monte Carlo Tree Search (MCTS) internal to its inference pass. It generates a thought trace , evaluates the coherence of , and only acts when the probability of goal convergence exceeds a threshold .

By maximizing this integral, the agent reduces the "hallucination rate" exponentially with the length of the reasoning chain. We are no longer prompting for an answer; we are constraining a system to navigate a topological space until it reaches a minimum energy state (the solved task).

3. Implementation Deep Dive: The Recursive Controller

Let us move from theory to concrete Python implementation. We will build a DevOpsAgent capable of managing infrastructure. We will avoid high-level abstractions (like LangChain's older chains) to expose the raw control logic required for 2026-era systems.

The Architecture

Our agent will follow a ReAct (Reason + Act) pattern, enhanced with a Reflective Loop. The cycle is:

- Observe: Read current state.

- Think: Decompose the problem and plan the next step.

- Act: Execute a tool (CLI command, API call).

- Critique: Analyze the tool output for errors (Self-Correction).

Component 1: The State and Tool Definitions

First, we define our environment. We assume the existence of a high-performance reasoning model client (mocked here as ReasoningEngine).

import json import subprocess from typing import List, Dict, Any, Optional from dataclasses import dataclass, field from datetime import datetime # --- 1. State Definition --- @dataclass class AgentState: objective: str history: List[Dict[str, str]] = field(default_factory=list) working_memory: Dict[str, Any] = field(default_factory=dict) completed_steps: List[str] = field(default_factory=list) max_steps: int = 15 current_step: int = 0 def add_interaction(self, role: str, content: str): self.history.append({ "role": role, "timestamp": datetime.now().isoformat(), "content": content }) # --- 2. Tool Registry --- # In 2026, tools are typed interfaces that agents invoke via structural decoding class InfrastructureTools: @staticmethod def run_shell(command: str) -> str: """Executes a shell command safely. RESTRICTED ENVIRONMENT.""" print(f"[TOOL EXECUTION] Running: {command}") try: # Simulate Terraform execution for the blog post example if "terraform plan" in command: return "Plan: 1 to add, 0 to change, 0 to destroy. + aws_instance.web" if "terraform apply" in command: return "Apply complete! Resources: 1 added, 0 changed, 0 destroyed." result = subprocess.run( command, shell=True, capture_output=True, text=True, timeout=10 ) return result.stdout if result.returncode == 0 else f"Error: {result.stderr}" except Exception as e: return f"System Exception: {str(e)}" @staticmethod def read_file(path: str) -> str: try: with open(path, 'r') as f: return f.read() except FileNotFoundError: return "File not found." @staticmethod def write_file(path: str, content: str) -> str: with open(path, 'w') as f: f.write(content) return f"Successfully wrote to {path}" TOOLS_SCHEMA = [ { "name": "run_shell", "description": "Execute CLI commands. Use for git, terraform, grep, etc.", "parameters": {"type": "object", "properties": {"command": {"type": "string"}}} }, { "name": "read_file", "description": "Read file contents.", "parameters": {"type": "object", "properties": {"path": {"type": "string"}}} }, { "name": "write_file", "description": "Write code or config to file.", "parameters": {"type": "object", "properties": {"path": {"type": "string"}, "content": {"type": "string"}}} } ]

Component 2: The Reasoning Loop

This is the brain. Note how we inject the concept of "Thoughts" separate from "Actions." This leverages the 2026 model capability to "think silently" before emitting a tool call.

class AutonomousDevOpsAgent: def __init__(self, model_client): self.client = model_client self.tools = InfrastructureTools() def execute(self, objective: str): state = AgentState(objective=objective) print(f"🚀 STARTING AGENT GOAL: {objective}")

while state.current_step < state.max_steps:

state.current_step += 1

# 1. Construct the prompt context

context = self._build_context(state)

# 2. Get Reasoning (Planning + Tool Selection)

# We force the model to output structured JSON including its 'reasoning_trace'

response = self.client.generate(

system_prompt="You are a Senior DevOps Engineer Agent. You verify before applying. You assume nothing.",

user_prompt=context,

tools=TOOLS_SCHEMA

)

# 3. Parse Decision

decision = response.get("decision") # parsed JSON

thought = decision.get("thought_process")

tool_name = decision.get("tool_name")

tool_args = decision.get("tool_args")

final_answer = decision.get("final_answer")

print(f"

--- Step {state.current_step} ---") print(f"🧠 THOUGHT: {thought}")

if final_answer:

print(f"✅ MISSION COMPLETE: {final_answer}")

return final_answer

# 4. Execute Tool

observation = self._run_tool(tool_name, tool_args)

print(f"👀 OBSERVATION: {observation[:100]}...")

# 5. Update State (Short-term memory)

state.add_interaction("assistant", f"Thought: {thought}

Call: {tool_name}({tool_args})") state.add_interaction("system", f"Output: {observation}")

return "Failed to complete task within step limit."

def _run_tool(self, name, args):

if name == "run_shell":

return self.tools.run_shell(args["command"])

elif name == "read_file":

return self.tools.read_file(args["path"])

elif name == "write_file":

return self.tools.write_file(args["path"], args["content"])

return "Error: Unknown tool."

def _build_context(self, state):

# Compress history if needed (Advanced: Token window management)

history_str = json.dumps(state.history[-10:], indent=2)

return f"OBJECTIVE: {state.objective}

HISTORY: {history_str}

Decide the next step. Return JSON with keys: thought_process, tool_name, tool_args, or final_answer."

### Component 3: The Execution (Scenario)

Here is how we simulate the agent handling a request to provision infrastructure.

```python

# Mocking the AI Client for demonstration purposes

class MockGemini3Client:

def __init__(self):

self.step = 0

def generate(self, system_prompt, user_prompt, tools):

# Scripted responses simulating a reasoning model's progression

self.step += 1

if self.step == 1:

return {"decision": {

"thought_process": "I need to check if there is existing terraform configuration to avoid overwriting state.",

"tool_name": "run_shell",

"tool_args": {"command": "ls -la"}

}}

elif self.step == 2:

return {"decision": {

"thought_process": "No terraform files found. I will create a main.tf for the requested AWS EC2 instance.",

"tool_name": "write_file",

"tool_args": {

"path": "main.tf",

"content": "resource "aws_instance" "web" { ami = "ami-12345678" ... }"

}

}}

elif self.step == 3:

return {"decision": {

"thought_process": "File created. Now I must initialize terraform and run a dry-run (plan) to verify syntax and logical correctness.",

"tool_name": "run_shell",

"tool_args": {"command": "terraform init && terraform plan"}

}}

else:

return {"decision": {

"thought_process": "The plan looks correct. I am ready to submit.",

"final_answer": "Terraform code generated and verified via plan. Ready for PR."

}}

if __name__ == "__main__":

# Instantiate and Run

client = MockGemini3Client()

agent = AutonomousDevOpsAgent(client)

agent.execute("Create a Terraform configuration for a t3.micro AWS instance")

4. Advanced Techniques & Optimization

Moving from a toy example to a production system involves solving the "Halting Problem" and managing entropy.

1. Directed Acyclic Graph (DAG) Planning

Linear chains (Step 1 -> Step 2) fail on complex tasks. Advanced agents use Hierarchical Planning. The "Manager" agent generates a DAG of sub-tasks (e.g., "Write Code", "Write Tests", "Update Docs"). These tasks are pushed to a priority queue and executed by "Worker" agents. This parallelization reduces wall-clock time and isolates errors.

2. Memory Management: The Context Window Limits

Even with 10M token windows, filling the context with garbage logs degrades reasoning performance (the "Lost in the Middle" phenomenon).

- Optimization: Implement a Sliding Window + Summary memory.

- Technique: Every 5 steps, an auxiliary LLM summarizes the oldest history into a single string (e.g., "Tried to install numpy but failed due to version conflict; resolved by upgrading pip"). This summary is preserved; raw logs are discarded.

3. Loop Detection and Entropy Injection

Agents often get stuck in loops: Read Error -> Try Fix -> Read Same Error.

- Implementation: Hash the (Action, Observation) tuple. If the hash repeats times, inject Temperature Noise. Force the model to choose a different path by raising the temperature parameter from 0.0 to 0.7 temporarily, or explicitly prompt: "You are stuck. Try a radically different approach."

4. Guardrails and AST Parsing

Never let an agent output raw code directly to production.

- Pattern: Agent generates code Parser validates Abstract Syntax Tree (AST) Linter checks compliance Sandbox executes.

- If the AST parser fails (e.g., syntax error), the error message is fed back to the agent as an observation. This creates a tight, self-correcting feedback loop without human intervention.

5. Real-World Applications

Where are we actually deploying these 2026-class agents?

Automated Security Remediation (DevSecOps)

At a major fintech, agents scan vulnerability reports. When a CVE is detected in a dependency, the agent: 1) Checks the dependency tree, 2) Finds a patched version, 3) Creates a branch, 4) Updates the manifest, 5) Runs the integration tests. If tests pass, it opens a PR. This reduces "Time to Patch" from days to minutes.

Data Science Pipelines

Instead of asking a chatbot to "write a pandas script," scientists give agents a dataset and a goal: "Find the correlation between customer churn and support ticket latency." The agent writes the Python code, executes it, realizes the data needs cleaning, writes the cleaning code, re-runs the analysis, and generates a PDF report with charts.

Legacy Code Migration

Agents are uniquely suited for the drudgery of converting Java 8 to Java 21, or COBOL to Go. They can iteratively refactor functions, write unit tests to ensure behavior parity, and debug their own translation errors in a loop that a human would find exhausting.

6. External Reference & Video Content

To visualize the leap in "Reasoning" capabilities, I highly recommend watching Two Minute Papers: "OpenAI's o3 - The Reasoning Model!" (simulated title for 2026 context).

Summary: The video explains the shift from "System 1" thinking (instinctive, fast, next-token prediction) to "System 2" thinking (deliberate, slow, chain-of-thought). It visualizes how the model explores a search tree of possibilities before generating a single output character. This visual connects directly to our "Reasoning Loop" implementation above—demonstrating that the "Think" step in our code is now being internalized by the model weights themselves, allowing for much higher reliability in multi-step planning.

7. Conclusion: The Service Layer Revolution

We are witnessing the death of the "Interface Layer" and the birth of the "Service Layer." We no longer build UI buttons for every possible action; we build semantic routers that dispatch intents to autonomous agents.

For the developer, the skillset has changed. You are no longer judged by your ability to memorize syntax or write clever prompts. You are judged by your ability to:

- Define clear State Spaces.

- Implement robust Tool Interfaces.

- Architect Error Recovery Loops.

The code provided above is a seed. Your next step is to take that AutonomousDevOpsAgent class, connect it to a real Docker container, and let it try to solve a simple bug in a repository. Watch it fail, watch it reason, and watch it correct itself. That feeling—of watching software think—is the essence of engineering in 2026.

Next Steps:

- Replace the

MockGemini3Clientwith a real API call to OpenAI or Anthropic. - Implement a

ToolRegistrythat uses Pydantic to automatically generate JSON schemas. - Add a SQLite database to persist agent state across crashes.